Virtualization and Virtual Infrastructure

Virtualization is the “separation of a resource or request for a service from the underlying physical delivery of that service” (Virtualization Overview, 2006). Scheffy (2007) argues that this separation allows two or more virtual computing environments to execute on a single piece of hardware by running multiple operating systems and applications on the same platform. Krutz and Vines (2010) identify the sharing and consolidation of services provided over a network as a key characteristic of virtualization. Virtualization is therefore a cost-effective strategy for optimizing the utilization of software and hardware resources. As an example, Scheffy (2007) describes a typical virtualization environment in which a Windows virtual machine and a Linux virtual machine share a single piece of hardware, such as a single workstation.

Technically, in the view of Scheffy (2007), a virtualization environment decouples users from the hardware and software characteristics of the host computing environment. According to Krutz and Vines (2010), that allows resources to be dynamically pooled to provide computing services on a single platform, as mentioned above. In addition, virtualization allows various services to be integrated into the cloud paradigm, allowing organizations such as Google to benefit from the pay-per-use strategy. Another typical example of virtualization is virtual memory. It is driven by a swapping mechanism that moves data that is not currently in use to disk storage while programs continue to execute using the physically available memory.
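The swapping mechanism behind virtual memory can be illustrated with a toy model. The sketch below is not taken from the cited sources: the class name, the two-frame limit, and the least-recently-used eviction rule are all assumptions chosen to keep the example small.

```python
from collections import OrderedDict


class TinyVirtualMemory:
    """Toy model: a few physical frames backed by a larger 'disk' store.

    Hypothetical illustration only; real operating systems are far more involved.
    """

    def __init__(self, physical_frames):
        self.physical_frames = physical_frames
        self.ram = OrderedDict()   # page number -> data, ordered by recency of use
        self.disk = {}             # pages that have been swapped out
        self.page_faults = 0

    def access(self, page, data=None):
        """Read a page (or write it when data is given), swapping as needed."""
        if page in self.ram:
            self.ram.move_to_end(page)               # mark as most recently used
        else:
            self.page_faults += 1
            if len(self.ram) >= self.physical_frames:
                # Assumed policy: evict the least recently used page to disk.
                victim, victim_data = self.ram.popitem(last=False)
                self.disk[victim] = victim_data
            # Swap the page in from disk, or start it empty if it is new.
            self.ram[page] = self.disk.pop(page, None)
        if data is not None:
            self.ram[page] = data
        return self.ram[page]


vm = TinyVirtualMemory(physical_frames=2)
vm.access(0, "a")
vm.access(1, "b")
vm.access(2, "c")      # RAM is full, so page 0 is swapped out to disk
value = vm.access(0)   # page 0 is swapped back in and page 1 is swapped out
```

The program sees three pages even though only two fit in physical memory at once, which is the decoupling the paragraph above describes.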

A blend of virtual infrastructure, often referred to as virtualization technologies, allows IT infrastructure to accommodate virtualization at all levels: network, operating systems, servers, and storage. In addition, the essential requirement of software and hardware abstraction can be achieved through the virtualization mechanism (Virtualization Overview, 2006).

Because organizational needs evolve dynamically, it is important to describe the virtualization environment, from both the hardware and the software point of view, as a decoupled system (Virtualization Overview, 2006). The decoupled system lets the infrastructure meet organizational computing needs and provides the rationale for investing in virtualized IT infrastructure. That clarifies the technical aspects of a virtualized environment and motivates the shift from a non-virtualized to a virtualized environment.

Non-virtualized and Virtualized Environments

Virtualization Overview (2006) provides the essential features typical of a new environment when shifting from a non-virtualized to a virtualized environment. The following table compares a non-virtualized and a virtualized environment.

Virtualization Overview (2006)

Benefits of Virtualization

Virtualization simplifies system upgrades, enables users to capture and own different instances of virtual machines, and lets users own the entire migration process from the old environment to the new virtualized one (Scheffy, 2007).

A virtualized environment allows for efficient use of energy and optimal use of memory. Processor capacity, speed, and storage can be configured at a finer granularity with dynamic virtual machines (Scheffy, 2007). Users can therefore deploy resources in an efficient and cost-effective way (Scheffy, 2007).

Organizations are usually limited by the physical boundaries of their data centers, for example by the servers they house. Virtualization removes this limitation on an organization’s IT infrastructure by mapping the physical computing environment to a logical environment. The virtual environment therefore allows controlled resource utilization, in which the software sees only the resources presented to it to run on. That spares hardware and software and avoids extra energy consumption when responding to user and other operational requests. A typical example is illustrated below, where the virtual environment is built on the concept of workload performance.

Virtualization also allows additional infrastructure such as data servers to be deployed efficiently, supports usage agreements based on server levels, provides fault tolerance, enables economies of scale, and allows virtual machines, data, and servers to be moved.

VMware

One organization that has vigorously pursued innovation in virtualization is VMware. A leading innovator in the market, VMware pioneered x86-based virtualization technology in 1998, which led to innovative hardware and software offerings for virtual environments (Scheffy, 2007).

A typical characteristic of VMware technology is that its virtual machine implements, in software, a uniform hardware image. This uniform image provides a platform on which guest operating systems and applications run in the virtual environment. The environment is typically managed by VMware’s VirtualCenter, which ensures that workloads are balanced and consolidated across servers (Scheffy, 2007).

On closer examination, VMware’s VirtualCenter provides an infrastructure in which hardware and software resources are pooled into a single unit that is administered from one point. At the same time, the virtualized architecture allows different operating systems running from a single machine to serve different user demands and organizational requests (Scheffy, 2007).
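The idea of pooling resources and consolidating workloads across servers can be sketched as a placement problem. The example below is only an illustration under stated assumptions: it uses a first-fit decreasing heuristic, which is not claimed to be how VirtualCenter works, and the virtual machine names, load figures, and host capacity are hypothetical.

```python
def consolidate(vm_loads, host_capacity):
    """Place virtual machines onto hosts using first-fit decreasing.

    vm_loads: dict of VM name -> load in arbitrary units (hypothetical values).
    host_capacity: capacity of each identical host, in the same units.
    Returns a list of hosts, each given as a list of VM names.
    """
    hosts = []  # each entry is [remaining_capacity, [vm names]]
    # Consider the largest loads first so that they are not left stranded.
    for name, load in sorted(vm_loads.items(), key=lambda item: -item[1]):
        if load > host_capacity:
            raise ValueError(f"{name} does not fit on any host")
        for host in hosts:
            if host[0] >= load:          # first host with enough room
                host[0] -= load
                host[1].append(name)
                break
        else:
            hosts.append([host_capacity - load, [name]])  # open a new host
    return [names for _, names in hosts]


placement = consolidate(
    {"windows-vm": 40, "linux-vm": 30, "db-vm": 60, "web-vm": 20},
    host_capacity=100,
)
```

With these illustrative numbers, four workloads that might otherwise occupy four physical servers are placed on two hosts.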

As part of its innovation, VMware has developed technologies such as VMotion. VMotion allows virtual machines to be moved from one physical machine to another without disrupting the services they offer. The virtual machine is reconfigured for its new environment while it continues to provide services (Scheffy, 2007).

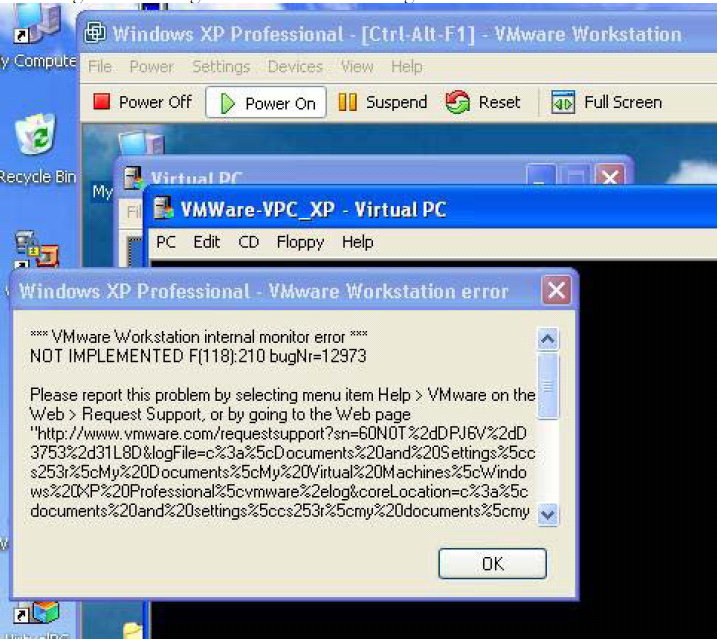
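Moving a running virtual machine without interrupting its services can be illustrated with a toy simulation of iterative pre-copy, a commonly described approach to live migration. The essay's sources do not specify the mechanism used by VMotion, so the technique, the function name, and all parameters below are assumptions made for illustration.

```python
def live_migrate(source_memory, writes_per_round, stop_threshold=1, max_rounds=10):
    """Simulate iterative pre-copy of a running VM's memory (hypothetical model).

    source_memory: dict of page number -> contents on the source host.
    writes_per_round: list of dicts; each holds the pages the still-running
        guest rewrites while one copy round is in progress.
    Returns (destination, source, rounds, pages_copied_during_pause).
    """
    source = dict(source_memory)
    destination = {}
    to_copy = set(source)          # the first round sends every page
    rounds = 0
    while len(to_copy) > stop_threshold and rounds < max_rounds:
        snapshot = {page: source[page] for page in to_copy}
        # The guest keeps running during the copy and dirties some pages.
        writes = writes_per_round[rounds] if rounds < len(writes_per_round) else {}
        source.update(writes)
        destination.update(snapshot)
        to_copy = set(writes)      # dirtied pages must be sent again
        rounds += 1
    # Short pause: send the last dirty pages, then resume on the destination.
    final_pages = len(to_copy)
    destination.update({page: source[page] for page in to_copy})
    return destination, source, rounds, final_pages


destination, source_after, rounds, paused_pages = live_migrate(
    {0: "a", 1: "b", 2: "c", 3: "d"},
    writes_per_round=[{1: "B", 2: "C"}, {2: "CC"}],
)
```

In this model the guest is paused only while the last dirty page is transferred, which is why the service appears uninterrupted to its users.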

Virtual PC

Jain (2007) observes that a virtual PC is essentially a replica of a 32-bit x86-based PC: only the processor differs, while the other hardware components are essentially the same as those of a 32-bit x86-based PC. Virtual PC installs well on VMware, as illustrated below.

The processor mentioned above is virtualized for the virtual PC. However, the architecture of the virtual PC allows users to take complete control of the PC in a virtualized manner. The guest hardware allows a guest operating system to be installed on it. Users control the virtual PC through the Virtual PC toolbar and host keys. Disk and memory management are implemented through a combination of techniques, such as the use of a virtual disk wizard. Jain (2007) provides a more detailed discussion of virtual PCs.

Red Hat Virtualization

In a typical virtualization environment, virtual machines provide a configured environment in which applications and guest operating systems can operate on more resources, such as memory and hardware, than are physically available. The system should therefore be designed with an architecture that accommodates the virtual environment. Red Hat virtualization exemplifies such an architecture (Redhat, 2007).

The architecture is composed of different software layers whose operation is based on the virtualization component of Red Hat. The environment is designed to accommodate multiple operating systems, with each guest operating system configured to run in its own domain. The virtualization environment optimizes the use of the physical CPU by creating virtual CPUs on which the guest operating systems run.

Red Hat virtualization can be deployed using either of its two existing options. The choice of deployment should reflect security and performance needs. Redhat (2007) provides a detailed discussion of Red Hat virtualization, including configuration, migration, hardware, and management of the virtual machines, among other issues (Krutz & Vines, 2010).

Worth mentioning is the domain-level security that the Red Hat virtualization environment can provide. Security is a critical concern when working in the environment, and it can be configured in the Red Hat environment as exemplified below (Krutz & Vines, 2010).

Security Issues

A domain security label can be created using xm as follows:

“xm addlabel [labelname] [domain-id] [configfile]” (Redhat, 2007).

Domain resources in the Red Hat virtualization environment can also be tested, which allows the security level of each resource to be investigated and, if need be, reconfigured:

“xm dry-run [configfile]” (Redhat, 2007).

These commands are only a sample of the configuration tasks and other operations that can be performed in the Red Hat virtualization environment.

Virtualization environments nevertheless come with security issues and risks, which require security professionals to devise innovative approaches to addressing them. The ephemeral nature of data, together with the need for information security and integrity, places further emphasis on security issues (Krutz & Vines, 2010).

Issues that the virtualization environment needs to address include software dependability. Dependable software executes with a high degree of predictability under all operating conditions. It should maintain its integrity and handle the error conditions it encounters or detects rather than failing, particularly in a server environment (Krutz & Vines, 2010).

Another security issue is trustworthiness. Because of the sensitive nature of the data and information stored in the cloud, organizations need to trust the software and be confident that it is not vulnerable to malicious attacks. The software should be resilient and recover in real time from attacks by intruders (Krutz & Vines, 2010).

The level of confidentiality should be high enough to limit any intentional or unintentional attacks, ward off covert channels, analyze traffic volumes for confidentiality purposes, maintain database security and integrity, and provide encryption to secure the transmission of data and information over insecure environments (Krutz & Vines, 2010).

As a matter of data and information integrity, unauthorized modification of data should be prevented. The availability of resources and data should also be enforced, so that cloud security can authenticate, audit, and ensure secure data transmission.
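The integrity and authentication requirements above can be illustrated with a message authentication code. The sketch uses HMAC-SHA256 from Python's standard library. The choice of HMAC, the key, and the message are assumptions made for illustration and are not drawn from Krutz and Vines (2010).

```python
import hashlib
import hmac


def sign(key: bytes, message: bytes) -> str:
    """Return a hex HMAC-SHA256 tag that binds the message to the key."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify(key: bytes, message: bytes, tag: str) -> bool:
    """Check a tag in constant time; False means the data or tag was altered."""
    return hmac.compare_digest(sign(key, message), tag)


key = b"hypothetical-shared-secret"   # illustrative key, not a real credential
message = b"customer record 17"       # illustrative payload
tag = sign(key, message)

untouched_ok = verify(key, message, tag)               # data arrived unchanged
tampered_ok = verify(key, b"customer record 18", tag)  # data was modified
```

A receiver that holds the same key can detect any modification made in transit, because the recomputed tag no longer matches the one that was sent.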

Why Companies Are Moving Towards Virtualization Computing

Companies have identified virtualization as the way forward in optimizing the use of resources to fulfill organizational business objectives. Conventional approaches to software and hardware resource usage have been found to be costly and inefficient. In addition, the old architectural approach of organizing layers of hardware and software adds to the inefficiency of the systems. The search for a more efficient and cost-effective way to address organizational computing needs has thus been the leverage point for organizations adopting the cloud computing environment (Lorincz, Redwine, & Sheh, 2003).

Among the factors that have moved organizations towards adopting cloud computing are its fundamental characteristics, which are reinforced by the benefits discussed above. The five elemental characteristics of cloud computing are ubiquitous network access, a high degree of elasticity, location independence, measured services, and resource pooling (Krutz & Vines, 2010).

Cloud computing can follow the private cloud, public cloud, hybrid cloud, or community cloud model. A private cloud is typically owned by an organization or leased to other users. More generally, these clouds can be owned either privately or by the public (Krutz & Vines, 2010).

The value proposition that moves organizations towards virtualization includes scalability. Scalability allows IT infrastructure to meet arising performance and business demands. Krutz and Vines (2010) observe that when an organization’s computing needs exceed the capacity of its computing resources, managers find a virtualized computing environment the best option for fulfilling its computing objectives. This implies that the cloud computing environment can be dynamically virtualized to allow for high levels of redundancy, ensuring reliability and high availability (Krutz & Vines, 2010).
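The scalability and redundancy argument above reduces to simple arithmetic: enough instances to carry the demand, plus spares for availability. The function below is a minimal sketch. Its name, its parameters, and the sample figures are hypothetical and do not come from the cited sources.

```python
import math


def instances_needed(demand, capacity_per_instance, spare_instances=1):
    """Number of virtual machine instances for a given demand.

    demand and capacity_per_instance share the same unit (for example,
    requests per second); spare_instances adds redundancy for availability.
    """
    if capacity_per_instance <= 0:
        raise ValueError("capacity_per_instance must be positive")
    return math.ceil(demand / capacity_per_instance) + spare_instances


# Illustrative figures: demand grows from 350 to 950 requests per second.
before = instances_needed(350, capacity_per_instance=200)
after = instances_needed(950, capacity_per_instance=200)
```

In a virtualized environment the difference between the two results can be provisioned as additional virtual machines rather than as newly purchased physical servers.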

Another factor driving organizations to shift to the virtualized environment is improved business processes. Organizations value the ability of customers and suppliers to share data and applications without having to focus on the computing infrastructure (Krutz & Vines, 2010).

Organizations find the costs of implementing IT infrastructure too high, particularly when starting up. In addition, organizations find it costly to implement IT infrastructure in emerging markets, and start-up capital is strained when advanced computing is needed. Starting organizations can use already available IT infrastructure, whereas building a data center can be prohibitively expensive. The costs of procuring new hardware and software and of hiring technical personnel to administer data centers impel organizations to adopt cloud computing in preference to other technologies.

Cloud computing facilities allow users to use cloud resources without having to interact with or manage the underlying hardware. In addition, the computing environment provides high data communication bandwidth, which supports low-cost computing. That ensures high levels of resource utilization and accommodates a large pool of users, further minimizing service costs (Scheffy, 2007).

Cloud computing is typically high-performance computing that is commercially viable and low in cost. Examples of cloud computing providers include Amazon Web Services, VMware, and Microsoft Azure Services, among others. VMware provides some of the most dynamic innovations in virtualization technologies.

References

Jain, S. (2007). Microsoft Virtual PC 2007 Technical Reference.

Krutz, R. L., & Vines, R. D. (2010). Cloud Security: A Comprehensive Guide to Secure Cloud Computing. Wiley Publishing, Inc., New York.

Lorincz, K., Redwine, K., & Sheh, A. (2003). Stacking Virtual Machines – VMware and VirtualPC. Web.

Scheffy, C. (2007). Virtualization for Dummies. Web.

Redhat. (2007). Virtualization Guide: Red Hat Virtualization.

Virtualization Overview. (2006). White Paper.