Abstract

This paper presents a proposal for a model of IT infrastructure tailored to help a business establishment better serve its client base and improve its administrative, communication and operational networks.

The business provides advanced rental training facilities and offers many services to students and instructors. The company has a large client database that includes clients such as Oracle, MySQL, MathWorks, VMware and various others. The company has grown steadily over the years and now has about 13 facilities across the US. At the end of each month, facility managers have to send reports and data by email and on paper to the CIOs.

The Execute Brands now wants to establish a new HR with a secure, centralised network design that helps the facilities communicate securely with the new HR. This paper presents a proposal to accomplish these goals. The proposal details the IT infrastructure and its components, including hardware, software and devices.

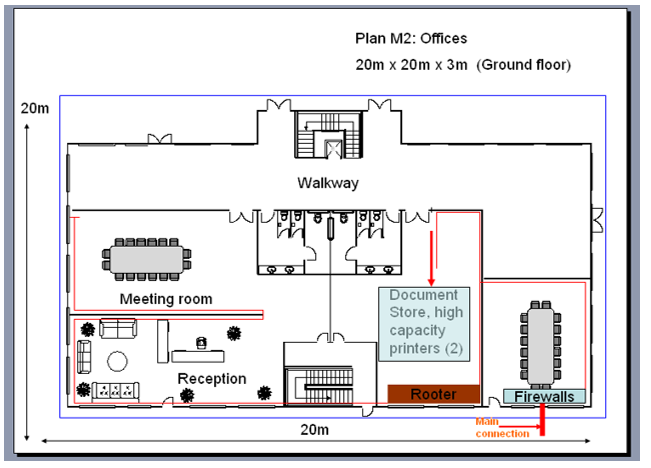

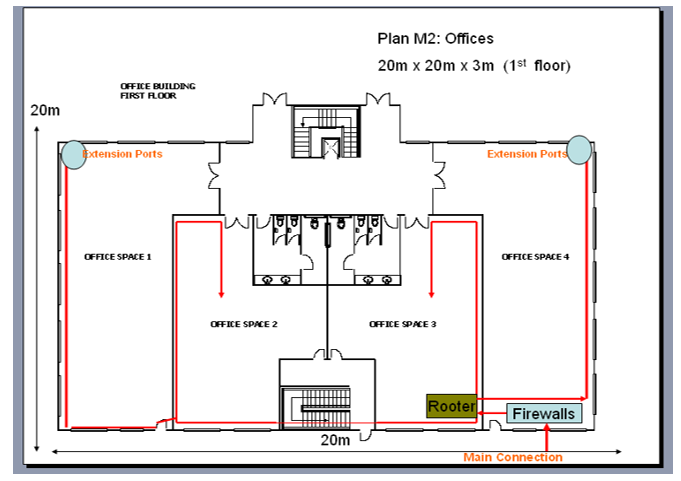

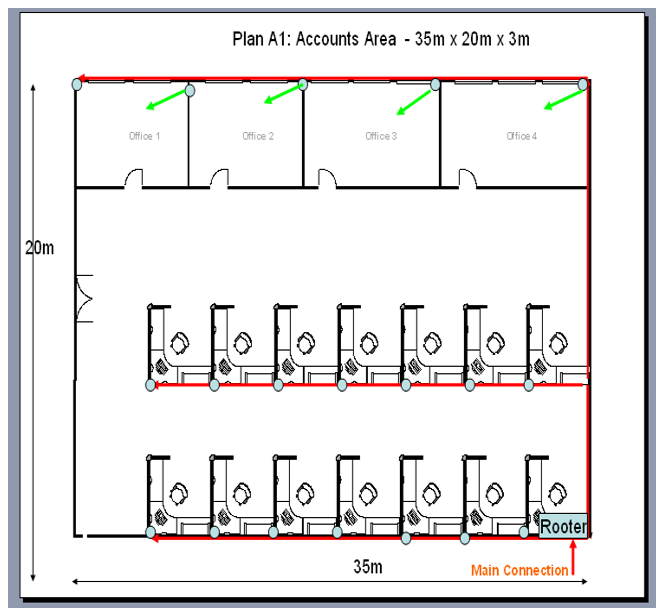

IT Infrastructure Layout

Diagrams

Infrastructure Description

Components

The following describes the IT components involved in assembling an IT infrastructure for a new HR with a secure, centralised network design. The design is meant to help the company's existing facilities communicate securely with the new HR.

1-IP address allocation, DHCP and DNS server

IP address allocation in the new IT infrastructure model for the headquarters (HQ) follows the guidelines and policies of IANA and ARIN on the allocation and routing of IP addresses. The system will use IPv4 (32-bit addressing). HQ customers will be leased IP addresses. The address space in the new building's IT model will be non-portable. Every data point in the IT infrastructure will be allocated one IP address, and additional addresses will be provided as needed, depending on demand. The IP addresses of the users of the HR system will be allocated dynamically.
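As a rough illustration of how one address block could be divided among the sites, the sketch below uses Python's standard `ipaddress` module. The parent block `10.0.0.0/16`, the `/24` subnet size and the site names are assumptions made for the example; the proposal itself does not specify them.

```python
import ipaddress


def plan_subnets(parent_cidr, site_names, new_prefix=24):
    """Assign one subnet of size /new_prefix to each site, in order."""
    parent = ipaddress.ip_network(parent_cidr)
    subnets = parent.subnets(new_prefix=new_prefix)  # generator of subnets
    plan = {}
    for name in site_names:
        try:
            plan[name] = next(subnets)
        except StopIteration:
            raise ValueError("parent block is too small for all sites") from None
    return plan


# Hypothetical site list: HQ plus the 13 facilities mentioned in the abstract.
sites = ["HQ"] + [f"facility-{n}" for n in range(1, 14)]
plan = plan_subnets("10.0.0.0/16", sites)
for name, subnet in plan.items():
    print(name, subnet)
```

Each site receives its own contiguous range, which keeps routing and filtering rules per facility simple.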

The designated network administrators will assign ranges of IP addresses to the DHCP server. Each client computer on a LAN anywhere in the HQ infrastructure will have its IP software configured to request an IP address from the DHCP server at network initialisation. The DHCP server will reclaim and reallocate IP addresses whose leases are not renewed, which enables the dynamic use of IP addresses.
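The lease, renew and reclaim cycle can be sketched as a toy model. This is not a DHCP implementation (it sends no network messages); the class name `LeasePool`, the address range and the lease length are hypothetical and chosen only for illustration.

```python
import ipaddress


class LeasePool:
    """Toy DHCP-style pool: lease, renew, and reclaim expired addresses."""

    def __init__(self, first, last, lease_seconds):
        start = int(ipaddress.ip_address(first))
        end = int(ipaddress.ip_address(last))
        self.free = [ipaddress.ip_address(n) for n in range(start, end + 1)]
        self.leases = {}  # client id -> (address, expiry time)
        self.lease_seconds = lease_seconds

    def reclaim(self, now):
        """Return addresses whose leases were not renewed to the free list."""
        for client, (addr, expiry) in list(self.leases.items()):
            if expiry <= now:
                del self.leases[client]
                self.free.append(addr)

    def request(self, client, now):
        """Lease an address to a client, or renew the one it already holds."""
        self.reclaim(now)
        if client in self.leases:
            addr, _ = self.leases[client]
        elif self.free:
            addr = self.free.pop(0)
        else:
            return None  # pool exhausted
        self.leases[client] = (addr, now + self.lease_seconds)
        return addr
```

With a two-address pool, a third client is refused until an existing lease expires without renewal, at which point the reclaimed address is handed out again.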

The DNS server will have its own network IP address and will hold a database of network names and addresses for all hosts on the HQ network. IP addresses will be allocated dynamically to key network clients, especially for operational purposes. The Dynamic Host Configuration Protocol (DHCP) will be used to reduce the workload of the HQ administrators. Because many devices will depend on the IT model, dynamic addressing will let them share a limited address space on the network, since only a particular set of users will be on the system at any one time.

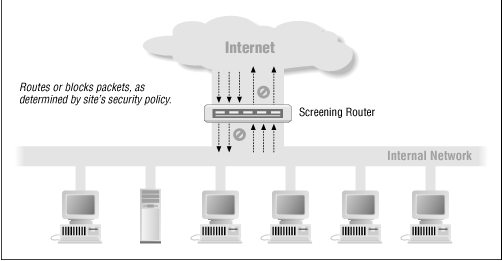

Packet filtering

Packet filters will be installed to pass or block packets as they are routed across networks. This will be a handy device, especially in the proposed IT infrastructure model, where the internet and local network systems will be integrated to some degree. The device controls the flow of data from the internet to an internal network, and vice versa. To set up packet filtering, the presented HQ IT model will define a set of rules that specify which types of packets (for instance, those to or from a particular IP address or port) are to be passed and which are to be blocked. Packet filtering in the presented design will be implemented on the Cisco router.

The packet filtering model will route packets between internal and external hosts. Every IP address will be covered in order to optimise security. In the presented IT model, the filtering system will allow or block specific types of packets in a way that reflects the HQ site's own security policy, as shown in the diagram below. The system will use a screening router firewall.

In the proposed IT infrastructure, packet filtering relies on the fact that every packet carries a set of headers containing particular information. The main information is:

- IP source address

- IP destination address

- Protocol (TCP, UDP, or ICMP packet)

- TCP or UDP source port

- TCP or UDP destination port

- ICMP message type
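To make the idea of rule-based filtering on these header fields concrete, here is a minimal sketch in Python. It is not Cisco router configuration; the `Packet` and `Rule` types, the first-match-wins policy, the default-deny fallback and all addresses (taken from documentation and private ranges) are assumptions for illustration.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass
class Packet:
    src: str
    dst: str
    protocol: str                    # "tcp", "udp", or "icmp"
    dst_port: Optional[int] = None


@dataclass
class Rule:
    action: str                      # "allow" or "deny"
    src_net: str = "0.0.0.0/0"       # default matches any source
    dst_net: str = "0.0.0.0/0"       # default matches any destination
    protocol: Optional[str] = None   # None matches any protocol
    dst_port: Optional[int] = None   # None matches any port

    def matches(self, pkt):
        return (
            ipaddress.ip_address(pkt.src) in ipaddress.ip_network(self.src_net)
            and ipaddress.ip_address(pkt.dst) in ipaddress.ip_network(self.dst_net)
            and self.protocol in (None, pkt.protocol)
            and self.dst_port in (None, pkt.dst_port)
        )


def filter_packet(rules, pkt, default="deny"):
    """Return the action of the first matching rule, else the default."""
    for rule in rules:
        if rule.matches(pkt):
            return rule.action
    return default


# Hypothetical policy: block one external range, allow mail and HTTPS inbound.
RULES = [
    Rule("deny", src_net="203.0.113.0/24"),
    Rule("allow", dst_net="10.0.0.25/32", protocol="tcp", dst_port=25),
    Rule("allow", dst_net="10.0.0.80/32", protocol="tcp", dst_port=443),
]
```

Because the rules are evaluated in order, the deny rule for the blocked range takes effect before any later allow rule, and anything not explicitly allowed falls through to the default.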

Servers and services

The project developers had to select the best feasible data storage and warehousing model. A choice has been made between the long-time rival database technologies Oracle and SQL Server.

Connolly, Thomas et al (2003) hold that, in sharp contrast to other typical vendor solutions, Oracle has been at the forefront of meeting industry needs, providing full-scale support for all industry standards across the broad spectrum of operating systems and hardware infrastructures in contemporary IT domains. Owing to its cross-platform (OS) portability, Oracle lets organisations decide what hardware and operating system they prefer to use, without facing the hurdle posed by SQL Server, which is tailored exclusively for Windows platforms.

Lightstone S et al (2007) note that any organisation can depend on Oracle technology to reduce deployment expenses while remaining flexible enough to meet future needs. This is particularly so because choosing Oracle database systems does not tie anyone to a specific hardware or operating system infrastructure. The scholars state that this is particularly important for independent software vendors, who can set up an Oracle database once and then deploy it anywhere they wish.

The experiments of Lightstone S et al (2007) indicate that SQL Server has limited support for the variety of hardware platforms that exist in IT domains. Their research indicates that SQL Server supports fewer hardware platforms than Oracle, which is compatible with all major hardware environments and operating systems. The scholars report that Oracle technology supports platforms in various categories, including ERP, CRM, and procurement and supply chain. They further observe that far more packaged software is deployed on Oracle than on SQL Server 2000.

Oracle is considered a technical innovation leader in the key data warehousing domains. Gray J and Reuter (2005) note that Oracle has transformed the technology terrain of business intelligence servers. Oracle technology addresses the entirety of server-side business intelligence and data storage needs. By extension, this includes extraction, transformation and loading (Gray, J. and Reuter 2005). Furthermore, the business intelligence merits of Oracle technology extend to Online Analytical Processing (OLAP) and data retrieval. One handy thing about Oracle database technology is that it removes the need to run many engines in the business intelligence landscape.

The HQ IT infrastructure will take advantage of the rapid deployment that Oracle technology allows, which removes the need to combine various server units when running the HQ business intelligence system. This is also expected to reduce management costs (Gray J and Reuter 2005). While SQL Server 2000 functions as a data storage facility, its OLAP evaluations are conducted in an external data repository. The problem with this is that retrieving data takes additional time.

The Domain Control Server

The HQ network will use a Windows NT domain with a Primary Domain Controller (PDC). The server will be useful for its backup domain controllers, as the company handles large amounts of sensitive and critical data that demand optimal security measures as well as backup. The Primary Domain Controller, which holds the SAM, will be used to authenticate access requests from research centres and all office work. The Security Accounts Manager (SAM) will be used to manage the database of usernames, passwords and permissions. The SAM for the HQ model will remain a component of the domain control server.

The SAM unit of the domain control server will be used to store the passwords of users, researchers, customers and officers across all HQ personnel. The unit will store passwords in a hashed format, which is one way of reinforcing the security of the HQ system. To guard against offline attacks, the developers have considered the Microsoft SYSKEY facility in Windows NT 4.0.
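The principle of storing a hash rather than the password itself can be sketched as follows. Windows NT's SAM uses its own hash formats, so this is not how the SAM works internally; the sketch instead uses salted PBKDF2 from Python's standard library purely to illustrate the idea, and the iteration count is an illustrative assumption.

```python
import hashlib
import hmac
import os

ITERATIONS = 100_000  # illustrative work factor, not taken from the proposal


def hash_password(password, salt=None):
    """Return (salt, digest); a fresh random salt is generated if none is given."""
    if salt is None:
        salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password, salt, expected_digest):
    """Recompute the digest and compare it in constant time."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, expected_digest)
```

Only the salt and digest need to be stored, so a stolen database does not directly reveal the passwords.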

File server

The HQ model will use Windows Server 2003 for file storage. Its merit lies in the Distributed File System (DFS) technologies, which offer user-friendly replication together with simple, fault-tolerant access to geographically scattered files.

The file and storage services unit is critically needed for efficient backup of user and all networking data, restoration of operations, and an enhanced encryption system, which will help HQ cut costs while boosting productivity.

Email server

The designed HR system will use hMailServer. The server has been selected because it is free of charge, which supports the goal of cutting costs. It will also provide administration tools for managing, handling and backing up all email-related data for all users of the HQ system. It has been selected specifically for its support for the IMAP, POP3 and SMTP email protocols.

Web & Proxy server

The system will use Squid as the web proxy server. While serving as a web cache, the server will also provide a means to block access to particular malicious URLs, and thus will provide critical filtering of all web access by the staff, researchers and customers serviced by HQ.
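The kind of decision a filtering proxy makes for each request can be sketched in a few lines. This is not Squid configuration; the blocklist entries are hypothetical placeholder domains, and matching on the host name and its subdomains is an assumption about the desired policy.

```python
from urllib.parse import urlsplit

# Hypothetical blocklist entries, for illustration only.
BLOCKED_DOMAINS = {"malware.example", "phishing.example"}


def is_blocked(url, blocked=BLOCKED_DOMAINS):
    """Return True if the URL's host is a blocked domain or one of its subdomains."""
    host = (urlsplit(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in blocked)
```

Matching on a leading dot avoids false positives for unrelated hosts that merely end in the same characters.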

Firewall

Firewalls have become one of the best-known security solutions in IT, and their popularity continues to grow as they play a critical role in information security. Nonetheless, a firewall infrastructure has to rest on effective and feasible security planning and a well-laid-out security policy. Firewalls work best hand in glove with effective, up-to-date anti-virus software and a broad range of intrusion detection systems. The cited authors examine the dynamics and dimensions of firewalls in relation to the other elements of IT security. HQ will use firewalls to protect the data warehouse and all of its communication networks from possible attacks.

Firewalls will be used together with VPNs. VPNs are commonly used protection mechanisms for information systems and work in concert with other mechanisms such as access controls, firewalls and antivirus tools. The VPN controls will be implemented in a layered manner to provide defence-in-depth, the logic being that no one control is 100% effective, so by layering these defences, the controls as a unit will be more effective and efficient.

6-VoIP

The HQ model has been designed around a VoIP system that will interoperate with the conventional public switched telephone network (PSTN) in order to enable traceable telephone communications the world over. VoIP will be particularly useful because the HQ enterprise is looking for cost-cutting models. The system will be valuable for the communication HQ needs among its officers and field officers, as well as for providing customer support at low cost.

The Infrastructure-Cloud Computing

Cloud computing is a web-based system design that entails intensive use of computer technology (computing). It is a model of computing in which scalable, typically real-time IT resources are offered as a service over the internet to front-end users who need not have knowledge of, expertise in, or control over the technology infrastructure in the so-called "cloud" that supports those services. Cloud computing encompasses software as a service (SaaS), interactive and dynamic Web 2.0, and other emerging IT models.

The underlying theme governing the development and implementation of cloud computing is dependence on the internet for satisfying the computing needs of the user. One well-known example is Google Apps, a facility that provides common business applications online, accessed through a web browser such as Internet Explorer or Mozilla Firefox.

Since the company provides various rental training facilities to students and tutors through IT clients such as Oracle and MySQL, it has to consider the merits of a cloud computing system, as there will be much collaboration with major players such as IBM, Google, Yahoo and Amazon in providing particular services to students and instructors. The growth of the cloud computing concept has been boosted by the collaboration between IT giants IBM and Google. The cloud landscape has also welcomed the entrance of the web research resource giant Wikipedia. IBM followed the Google-IBM effort by announcing its own cloud computing concept, Blue Cloud. This will be a feasible system for the HR.

SWOT Analysis

Strengths and Opportunities

The cloud computing system of the new HR brings remarkable operational efficiency that both large and small organisations can realise. In addition, server consolidation and virtualisation rollouts are set up to run in tandem with cloud computing systems.

Weakness and Threats

The cloud computing concept of the new HR is dogged by the same geopolitical dilemmas that dog the growth of the internet. The cloud (internet) spans borders and is a clear form of globalisation. The challenge facing many cloud computing service providers is that they have to satisfy a plethora of regulatory environments if they are to deliver their services in a diverse global market.

Efforts to harmonise the regulatory environment have often hit a snag. The US-EU Safe Harbor was one such attempt. Since 2009, providers such as Amazon Web Services have catered to major markets, including the United States and the European Union, by deploying local infrastructure and letting customers select availability zones. Even so, there are limitations regarding security and privacy, from the level of the individual user through to the government level. One salient weakness of cloud computing models concerns data security, specifically data encryption.

Security and Risk Mitigation Matters

Companies and end users that provide or use cloud computing services have to follow the available guidelines to avoid data loss and the compromise of their private data. The technology analyst and information security consultancy firm Gartner lists seven security issues that must be discussed and clarified with a cloud computing vendor. The users of the new HR system will have to pay attention to the following:

- Privileged user access: users must enquire about who has special access to data, and about the hiring and management of such administrators.

- Regulatory compliance: users must ensure that a vendor is willing to undergo external audits and/or security certifications.

- Data location: users should ask whether a provider allows any control over the location and storage of data.

- Data segregation: users must ensure that encryption is available at all service levels, and that the encryption schemes were designed and tested by experienced experts.

- Recovery: users must establish what would happen to their data should disaster strike, whether data restoration systems are in place, and how long the restoration process is expected to take.

- Investigative support: users have to enquire whether the vendor is able to investigate any inappropriate or illegal activity.

- Long-term viability: users have to establish what is likely to happen to their data if the company goes out of business, how the data will be returned, and in what format.

The project developers also advise that, in practice, a user can establish data recovery capabilities by experiment. This may involve asking to receive old data back, establishing how long fulfilling that request takes, and verifying that the checksums of the returned data match those of the original data.
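The checksum comparison described above might look like the following minimal sketch. SHA-256 is an assumed choice of checksum (the proposal does not name one), and the function names are hypothetical.

```python
import hashlib


def sha256_of(data: bytes) -> str:
    """Return the SHA-256 checksum of the data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def restore_matches(original_checksum: str, restored_data: bytes) -> bool:
    """Check that restored data has the checksum recorded before upload."""
    return sha256_of(restored_data) == original_checksum
```

In use, the checksum would be recorded before the data is handed to the provider and compared again when the restored copy comes back.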

Virtual Private Networks (VPNs) are implementations of cryptographic technology. A VPN is a private and highly secure network connection across IT infrastructures and systems. The Virtual Private Network Consortium (VPNC) defines a VPN as a private data network that makes use of a tunnelling protocol and security procedures. The popularity of VPNs comes from their ability to securely extend an entity's internal network connections to remote locations beyond the trusted network.

Whitman, Michael et al (2009) provide an in-depth introduction to firewalls that focuses on both the managerial and technical dynamics of the critical area of data security in cloud computing landscapes. The scholars explain that VPNs are temporary, encrypted and authenticated connections that enable someone in a remote setting to access resources and data on the internal cloud computing network.

Whitman and Mattord (2009) underscore the relationship between risk management and information security. The authors state that there are four risk management strategies from which strategists may choose: avoidance, transference, mitigation and acceptance. In the authors' terms, avoidance is a strategy that attempts to prevent the exploitation of a loophole or vulnerability by an identified threat or threat agent (for instance, a hacker program or a virus). The objective of transference is to move the risk to another organisation, such as an internet service provider, an insurer or any other third party of the cloud computing service, which will shoulder the risk.

Mitigation is an approach that focuses on reducing the potential damage that can result from the exploitation of a loophole or vulnerability, through adequate planning and preparation. The cited scholars note that mitigation has become one of the most popular strategies, given that risk is inherent in contemporary networks and technology designs. The last strategy, acceptance, entails doing nothing about the loopholes or vulnerabilities identified. The grave aspect of acceptance is that it also means accepting the potential outcomes of exploitation.

Craig Thomson (1998) underscores that the use of protection mechanisms is central to ensuring that data and other important information are secure. The authors present VPNs as commonly used protection mechanisms for information systems, working in concert with other mechanisms such as access controls, firewalls and antivirus tools. The writers also underscore that controls such as VPNs are in many cases implemented in a layered manner in cloud computing networks to provide what is commonly known as defence-in-depth: "The logic being that no one control is 100% effective so by layering these defenses, the controls as a unit are more effective and efficient".

The scholars also note the cost implications of implementing such control measures, stating that administrators must perform a cost-benefit analysis to assess their economic feasibility.
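One common textbook formulation of such a cost-benefit analysis compares the annualised loss expectancy (ALE) before and after a control is introduced and subtracts the annual cost of the safeguard. The sketch below assumes that formulation; all monetary figures and rates in the usage note are hypothetical.

```python
def single_loss_expectancy(asset_value, exposure_factor):
    """SLE: expected loss from one incident (asset value x fraction lost)."""
    return asset_value * exposure_factor


def annualized_loss_expectancy(sle, annual_rate_of_occurrence):
    """ALE: expected loss per year (SLE x incidents per year)."""
    return sle * annual_rate_of_occurrence


def cost_benefit(ale_prior, ale_post, annual_cost_of_safeguard):
    """CBA = ALE(prior) - ALE(post) - ACS; a positive value favours the control."""
    return ale_prior - ale_post - annual_cost_of_safeguard
```

For example, with a hypothetical asset worth 100,000, an exposure factor of 0.5, an incident rate falling from 0.5 to 0.1 per year, and a safeguard costing 8,000 per year, the ALE drops from 25,000 to 5,000 and the net benefit is 12,000 per year.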

Whitman and Mattord note how information security mechanisms function in concert. The authors explain that Intrusion Detection Systems (IDS) function like burglar alarms and produce an alert or notification if an attack against a cloud network system occurs. According to the scholars, scanning and evaluation tools are used to conduct vulnerability assessments as a way of identifying areas of concern within a specific cloud computing IT infrastructure.

In systems classified as passive or normative, the intrusion detection system sensor detects a potential security compromise and submits the information to a log. The system then sends a signal to alert the console and the owner. In reactive systems, also known as intrusion prevention systems (IPS), the IDS responds to the potential threat by resetting the connection, or sometimes by reprogramming the firewall to block network traffic from the suspected malicious source.

Some IDS systems are automated, whilst others are triggered at the command of a designated operator. An IDS analyses suspected attacks and can also detect threats that originate from within the system. Sebring, Michael (2003) concurs that this is done by examining cloud computing network communications, identifying heuristics and patterns of typical network threats, and then signalling operators about the threat activity. The new HR model will also include IDS in certain components of its design.
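The difference between passive and reactive behaviour can be sketched with a toy detector. The failed-login heuristic, the threshold and the event format are assumptions made for the example; real IDS products use far richer signatures and heuristics.

```python
from collections import Counter


def detect_suspects(events, threshold):
    """Flag source addresses with at least `threshold` failed logins.

    `events` is an iterable of (source_address, outcome) pairs.
    """
    failures = Counter(src for src, outcome in events if outcome == "fail")
    return sorted(src for src, count in failures.items() if count >= threshold)


def respond(suspects, mode, blocklist):
    """Passive mode only raises alerts; reactive mode also blocks the sources."""
    alerts = [f"ALERT: repeated failed logins from {src}" for src in suspects]
    if mode == "reactive":
        blocklist.update(suspects)  # stands in for reprogramming the firewall
    return alerts
```

In passive mode the blocklist is left untouched and only the alerts are returned for the operator, whereas reactive mode additionally records the source so that a firewall could drop its traffic.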

The Cloud Computing Components

Application

In cloud computing frameworks, a cloud application leverages the cloud (the web interface) in its software architecture so that there is no need to install and run the application on the user's or customer's own computer. This reduces the burden of software access, maintenance, upgrades, ongoing operations and support. Examples of the foregoing are:

Peer-to-peer and volunteer computing (BitTorrent, BOINC projects and Skype), web applications such as Facebook, software as a service such as Google Apps and Salesforce, and software plus services such as Microsoft Online Services.

Client

A cloud computing client is computer hardware or software that relies on the cloud (the web or internet interface) for application delivery. The hardware can also be specifically designed to deliver cloud services, in which case it will be non-functional without the cloud. For the new HR system the cloud computing clients will be:

Mobile clients (Android, iPhone and Windows Mobile), thin clients (CherryPal, Zonbu and gOS-based systems) and thick clients, that is, web browsers such as Google Chrome and Mozilla Firefox. These will be made available to the students and instructors through the cloud computing system.

Infrastructure

Cloud infrastructure denotes infrastructure, typically a platform virtualisation environment, delivered as a service. Examples are GoGrid and Skytap, which belong to the category of full virtualisation; Sun Grid, which belongs to the category of grid computing; RightScale, which is categorised as management infrastructure; and Amazon Elastic Compute Cloud, which is categorised as compute infrastructure.

Platform

A cloud computing platform denotes platform as a service, that is, the delivery of a computing platform or, by extension, a solution stack as a service. The primary purpose of the platform is to deploy applications without the cost and complexity of purchasing and managing the underlying hardware and software layers. For the new HR model the key platforms will be web application frameworks, Ajax, Python Django on Google App Engine, Ruby on Rails on Heroku, etc.

Service

A cloud computing service, such as a web service, is a software system designed to support interoperable machine-to-machine interaction over a network. The network, and thus the cloud service, can be accessed by external computing elements such as software.

Provider

Providers are those who ensure the real-time provision of cloud computing networks to users. Providing the services to third-party users requires an extensive collection of resources as well as expertise in building and managing the downstream systems and applications for various data resources.

Users

Cloud computing users are viewed as the integral quality drivers who hold constraining power over the value of an IT solution. The essence and value of a solution relies largely on the view it has of its end-user requirements and user categories. For the new HR model the users are mostly the students and tutors, as well as admin and other operations staff, who will use the system for learning, research, communications and other purposes.

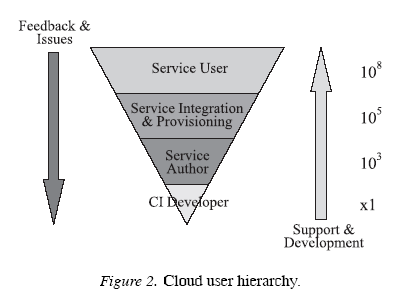

The diagram above illustrates the cloud computing user hierarchy.

In simpler terms, a user is a consumer of cloud computing. One of the key areas of interest in contemporary cloud computing relates to the security of the end user. The diagram illustrates four broad, non-exclusive user categories. These include system or cyberinfrastructure (CI) developers and the authors of different component services and principal applications. The user categories also include technology and domain personnel who bring the main services together into complex service structures and their systemic designs.

These personnel deliver the services to the front-end users (the students, tutors and admin staff). The user categories in cloud computing also include the users of simple and composite services. By extension, they include domain-specific groups working in a broad environment, together with indirect user groups such as policy makers and other stakeholders.

References

Whitman, Michael, and Herbert Mattord. Principles of Information Security. Canada: Thomson, 2009. Pages 290 & 301.

Anderson, Ross. Security Engineering. New York: Wiley, 2001. Pages 387-388.

Anderson, James P., “Computer Security Threat Monitoring and Surveillance,” Fort Washington, PA, James P. Anderson Co., 1980.

Denning, Dorothy E., “An Intrusion Detection Model,” Proceedings of the Seventh IEEE Symposium on Security and Privacy, 1986, pages 119-131.

Lunt, Teresa F., “IDES: An Intelligent System for Detecting Intruders,” Proceedings of the Symposium on Computer Security; Threats, and Countermeasures; Rome, Italy, 1990, pages 110-121.

Lunt, Teresa F., “Detecting Intruders in Computer Systems,” 1993 Conference on Auditing and Computer Technology, SRI International.

Sebring, Michael M., and Whitehurst, R. Alan., “Expert Systems in Intrusion Detection: A Case Study,” The 11th National Computer Security Conference, 2003.