Introduction

This literature review focuses on the Web Application Performance Tool (WAPT), which is used to test web-based interfaces and related applications. Aside from general background, the review contains sections comparing load testing and stress testing and a section discussing the gaps in knowledge identified from the findings of different studies. The primary use of WAPT is to analyze the performance capabilities and metrics of web tools, such as their behavior under stress and load. WAPT mostly targets web interfaces, web servers, web APIs, and general websites (Kar & Corcoran, 2019). It is stated that “web applications are defined as the applications that use a Web browser to fulfill the requirements of users and are written in browser compatible programming languages (such as HTML, JavaScript, and CSS)” (Jailia et al., 2018, p. 239). The current literature also examines specific WAPT categories, such as the web application performance evaluation tool GazeVisual, which conducts visual, statistical, and quantitative analysis of web interfaces (Kar & Corcoran, 2019). Web applications therefore require systematic testing so that fluctuations in their performance can be tracked and the underlying systems adjusted accordingly.

Firstly, load testing is the collection of performance indicators, such as the response time of a software and hardware system or device to an external request, in order to establish whether the system complies with its requirements. According to the current literature, load testing is usually done to evaluate the behavior of an application under a given expected load (Jailia et al., 2018). This load can be, for example, the expected number of concurrent users of the application; in the case of monitoring systems, it is the number of people connected to the system who make a given number of transactions in a time interval.
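The idea of an expected load of concurrent users can be illustrated with a minimal sketch. It assumes a hypothetical `handle_request` stub in place of a real application, and the function names, user counts, and the 2 ms delay are illustrative assumptions rather than values taken from the reviewed studies. Each virtual user issues requests in its own thread, and the run is summarized by average response time and throughput.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import mean


def handle_request() -> None:
    """Hypothetical stand-in for the system under test (not a real server)."""
    time.sleep(0.002)  # assumed processing time of about 2 ms per request


def simulate_user(requests_per_user: int) -> list[float]:
    """One virtual user sends requests back to back and records each response time."""
    timings = []
    for _ in range(requests_per_user):
        start = time.perf_counter()
        handle_request()
        timings.append(time.perf_counter() - start)
    return timings


def run_load_test(concurrent_users: int, requests_per_user: int) -> dict:
    """Run all virtual users in parallel and summarize the collected indicators."""
    if concurrent_users < 1 or requests_per_user < 1:
        raise ValueError("need at least one user and one request per user")
    started = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        per_user = list(pool.map(simulate_user, [requests_per_user] * concurrent_users))
    elapsed = time.perf_counter() - started
    samples = [t for user_timings in per_user for t in user_timings]
    return {
        "requests": len(samples),
        "avg_response_s": mean(samples),
        "max_response_s": max(samples),
        "throughput_rps": len(samples) / elapsed,
    }


def meets_requirement(report: dict, max_avg_response_s: float) -> bool:
    """Compare the measured average response time with a stated requirement."""
    return report["avg_response_s"] <= max_avg_response_s


if __name__ == "__main__":
    report = run_load_test(concurrent_users=10, requests_per_user=20)
    print(report, meets_requirement(report, max_avg_response_s=0.05))
```

In practice the stub would be replaced by real HTTP calls, and dedicated tools add features such as ramp-up schedules and reporting; the sketch only shows how the indicators named above relate to one another.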

Secondly, stability testing is done to ensure that the application can withstand the expected load for a long time. According to the literature, “the tests also demonstrate how the GazeVisual software can quantitatively show the difference in the quality of gaze data from the different consumer eye trackers, operating on different platforms, e.g., desktop, tablet, and head-mounted setups” (Kar & Corcoran, 2019, p. 298). This type of testing monitors an application’s memory consumption to identify potential leaks. In addition, it reveals performance degradation, which appears as slower information processing and longer response times late in the test compared with its beginning.
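The two signals described above, growing memory consumption and response times that worsen over a long run, can be checked with a small sketch. The function names, window size, and thresholds below are hypothetical choices for illustration; real stability tests would feed in measurements sampled from the running application.

```python
from statistics import mean


def degradation_ratio(response_times: list[float], window: int) -> float:
    """Mean response time of the last `window` samples divided by that of the first `window`."""
    if window <= 0 or len(response_times) < 2 * window:
        raise ValueError("need at least two non-overlapping windows of samples")
    return mean(response_times[-window:]) / mean(response_times[:window])


def grows_steadily(memory_mb: list[float], min_growth_mb: float) -> bool:
    """Flag a possible leak: memory never drops between samples and total growth exceeds a threshold."""
    if len(memory_mb) < 2:
        return False
    never_drops = all(later >= earlier for earlier, later in zip(memory_mb, memory_mb[1:]))
    return never_drops and (memory_mb[-1] - memory_mb[0]) > min_growth_mb
```

A ratio well above 1 suggests that the application responds more slowly at the end of the test than at the beginning, while a steadily growing memory series is only a hint of a leak and would need confirmation with a profiler.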

Thirdly, stress testing is performed to assess the reliability and resilience of the system when the limits of normal operation are exceeded. It determines how reliable the system is under extreme or inconsistent loads and shows whether the system performs satisfactorily when the current load greatly exceeds the expected maximum. Typically, stress testing concentrates on the system’s persistence, availability, and exception handling under heavy load rather than on what is considered correct behavior under normal conditions.
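One common way to exceed the expected maximum is to raise the load step by step until the system stops behaving acceptably. The sketch below assumes a hypothetical `measure_error_rate` callable that returns the fraction of failed requests at a given load; the step size, error threshold, and the toy capacity model in the usage example are illustrative assumptions, not figures from the reviewed literature.

```python
from typing import Callable, Optional


def find_breaking_point(
    measure_error_rate: Callable[[int], float],
    expected_max: int,
    step: int,
    max_error_rate: float,
    limit: int,
) -> Optional[int]:
    """Increase load from the expected maximum until the error rate exceeds the threshold.

    Returns the first load level at which the system is considered broken,
    or None if it survives every level up to `limit`.
    """
    if step <= 0:
        raise ValueError("step must be positive")
    load = expected_max
    while load <= limit:
        if measure_error_rate(load) > max_error_rate:
            return load
        load += step
    return None


def toy_error_rate(load: int, capacity: int = 150) -> float:
    """Hypothetical system model: every request beyond `capacity` fails."""
    return 0.0 if load <= capacity else (load - capacity) / load


if __name__ == "__main__":
    print(find_breaking_point(toy_error_rate, expected_max=100, step=25,
                              max_error_rate=0.1, limit=1000))
```

With the toy model, the search starts at the expected maximum of 100 users and reports the first level at which more than 10% of requests fail.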

Finally, configuration testing examines not how the system performs under an applied load but how configuration changes affect its performance. According to recent literature, “it helps faster deployment of Web applications, and applications are more efficient in usage” (Jailia et al., 2018, p. 242). An example of such testing would be experimenting with different load balancing techniques. Configuration testing can also be combined with load testing, stress testing, or stability testing.
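The load balancing example can be made concrete with a small sketch that replays the same workload under two configurations and compares the load on the busiest server. The two strategies shown, round robin and least loaded, are chosen here for illustration and are not taken from the cited study; the request costs are hypothetical units of work.

```python
def round_robin(costs: list[int], servers: int) -> list[int]:
    """Assign requests to servers in strict rotation, ignoring their current load."""
    loads = [0] * servers
    for i, cost in enumerate(costs):
        loads[i % servers] += cost
    return loads


def least_loaded(costs: list[int], servers: int) -> list[int]:
    """Assign each request to the server with the smallest accumulated load (first one on ties)."""
    loads = [0] * servers
    for cost in costs:
        target = loads.index(min(loads))
        loads[target] += cost
    return loads


def compare_configurations(costs: list[int], servers: int) -> dict:
    """Report the busiest server's load under each configuration for the same workload."""
    return {
        "round_robin": max(round_robin(costs, servers)),
        "least_loaded": max(least_loaded(costs, servers)),
    }


if __name__ == "__main__":
    # Alternating heavy and light requests, two servers.
    print(compare_configurations([5, 1, 5, 1, 5, 1], servers=2))
```

For this particular workload, round robin sends every heavy request to the same server, while the least-loaded strategy spreads the work more evenly. The point of configuration testing is that the workload stays fixed and only the configuration changes.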

The field of web services is developing rapidly, which has increased the importance of software testing tools. Software testing is a complex process of analyzing a product to verify and validate that it meets users’ requirements. Many testing tools are available for web applications of different sizes, and it remains unclear which of them consumes the least hardware resources.

This literature review gathers information on software testing tools in order to identify the tool with the lowest hardware consumption. The reviewed literature includes general information on performance testing technologies and tools, specifics of load testing, and a comparison between several particular tools. It also describes assorted types of applications used for testing different software parameters, including load, stress, security, smoke, unit, acceptance, graphical user interface, performance, and so-called “gorilla testing” (Pradeep & Sharma, 2019). Each of the described parameters is important for an application to work correctly, so a thorough test requires multiple tools.

Although some tools can only test a single parameter, many of them have several functions and key operations, making them able to test different parameters (Pradeep & Sharma, 2019). For instance, Grinder is a tool specifically created for load testing, while Apache JMeter is applicable for testing load and performance (Pradeep & Sharma, 2019). Several other tools described in the reviewed sources, such as Pylot, have up to five functions useful for software testing (Pradeep & Sharma, 2019).

Because the corresponding tools test so many parameters, it is crucial to understand each parameter separately, and the reviewed literature provides detailed information on them. Load relates to software behavior when multiple users access it simultaneously, while stress testing analyzes software robustness (Pradeep & Sharma, 2019). Security testing addresses vulnerabilities of the product, smoke testing ensures proper functioning of critical application features, and unit testing is associated with the individual behavior of each separate unit (Pradeep & Sharma, 2019). Acceptance, graphical user interface, and “gorilla” testing provide a deeper analysis of the internal functions of the software (Pradeep & Sharma, 2019).

Finally, performance testing is the most complicated form of software testing, which “involves the evaluation of the software product on multi-dimensional aspects including Speed, Load, Traffic, Stress, vulnerability, and many others” (Pradeep & Sharma, 2019, p. 399). Furthermore, the reviewed literature describes various challenges that make testing web services much more complicated than testing traditional systems. According to Ibrahim and Fadlallah (2018), this complexity has two primary causes: the complicated nature of web services and the limitations associated with service-oriented architecture. Additionally, web services have a distributed nature because they rely on multiple protocols and on the limited Web Services Description Language (WSDL) (Ibrahim & Fadlallah, 2018).

Load Testing

Load testing is a common form of software testing and is usually the first step in the testing process. Shrivastava and Prapulla (2020) define load testing as “a type of non-functional testing to recognize an application’s actions when it is swarmed with a large number of user hits” (p. 3392). To handle the load of users who use an application simultaneously, it is critical to analyze the capacity of the tested software before the program is delivered to users (Pradeep & Sharma, 2019). As mentioned earlier, testing tools may be multifunctional, and many of them can perform load testing, but some tools, such as LoadRunner, are specifically designed to analyze user load (Shrivastava & Prapulla, 2020).

Additionally, one study investigated the usage rate of various web application testing tools, that is, how many people use one tool or another. For load testing tools, the study reports that 80% of the people questioned use Apache JMeter, while only 10% prefer LoadRunner (Abbas et al., 2017). However, LoadRunner works with a wider range of scripting languages, including JavaScript, Citrix, ANSI C, and .NET (Abbas et al., 2017). Apache JMeter, by contrast, supports only two scripting languages, JavaScript and BeanShell, which makes LoadRunner the more versatile testing tool in this respect. In terms of plugin support, the tools are similar: both have several plugins that extend their testing capabilities (Abbas et al., 2017). These comparison results suggest that people’s preference for Apache JMeter is not easily explained, since LoadRunner does not appear to be a less efficient testing tool.

The reviewed literature contains results of various experimental tests of load testing tools. Researchers note that load testing is a complicated process that explores several indicators of the loaded environment, such as throughput and response time (Shrivastava & Prapulla, 2020). The results of the corresponding experiments show that load testing tools such as LoadRunner are efficient at finding potential bugs and at locating and investigating software defects (Shrivastava & Prapulla, 2020). In addition, such tools apply random models of user behavior while testing the load of a web application, which helps build realistic load models for future analysis (Shrivastava & Prapulla, 2020). According to recent studies, Locust has also proven to be highly efficient and to be “an easy-to-use and easy-to-configure system” in terms of load testing (Shrivastava & Prapulla, 2020).

Apache JMeter is a tool for load and performance testing. It analyzes various software protocols of the application and presents an in-depth audit of them (Pradeep & Sharma, 2019). A similar Python-based testing tool, Locust, can also evaluate the behavior of a web application while multiple concurrent users are accessing it (Pradeep & Sharma, 2019). Locust generates artificial traffic directed at a web service, which helps investigate its potential performance (Pradeep & Sharma, 2019). Additionally, Locust has key features such as platform independence and the ability to analyze standalone and live websites (Pradeep & Sharma, 2019). LoadRunner works similarly, simulating artificial traffic while collecting information on key components of infrastructure, such as web servers or database servers (Ibrahim & Fadlallah, 2018).

Stress Testing

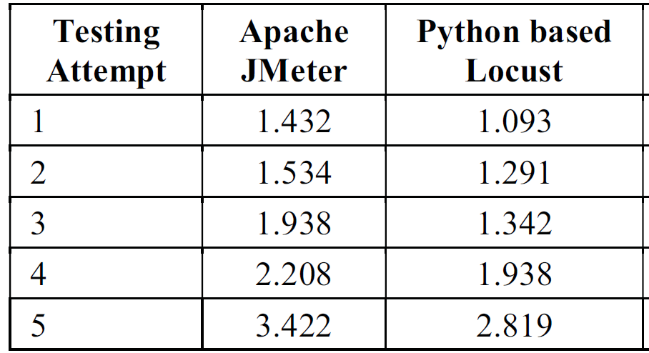

Researchers have used several criteria to compare different testing tools, including usability and performance. Although JMeter and Locust have demonstrated relatively similar usability test results, their performance testing results differ significantly (Ibrahim & Fadlallah, 2018). According to the authors, Apache JMeter shows a faster response time at low numbers of concurrent users and higher overall throughput than Locust. However, as the number of users accessing the web application simultaneously grows, the response time of Locust becomes significantly faster than that of JMeter (see Table 1: The Results of Tools Testing).

In addition, JMeter and Locust have been compared directly as web application testing tools. According to the researchers, Apache JMeter shows a significantly higher average response time across 10 similar experiments (Ibrahim & Fadlallah, 2018). The results of all the experiments show that the average response time for JMeter can rise to 1000 units, while that of Locust never exceeds 10-20 units (Ibrahim & Fadlallah, 2018). This part of the comparison suggests that Locust performs far better in terms of average response time, since it responds to requests many times faster.

A study that evaluated a number of web testing tools, including Apache JMeter and Locust, submitted similar workloads to both tools in order to observe execution time, memory overflow, and memory consumption. Locust consistently provided lower execution times than JMeter for an identical workload, though the differences were not large (Sharma & Kumar, 2020). Although both tools proved able to manage high workloads of concurrent users when testing small to medium-sized web client applications, each has noticeable and unique drawbacks. Such web applications are projects that require no more than 1000 hours of work and a team of 5 experts (Pradeep & Sharma, 2019). In terms of memory consumption during testing, Locust tends to outperform JMeter (Table 2).

Gaps: Comparison of the Research Findings

All studies addressed in this literature review converge on one central point: software testing tools play a significant role in the modern software industry. Web applications are highly important because of their worldwide distribution, as almost every domain of human life now has an online form (Jailia et al., 2018). Most reviewed studies claim that the absence of appropriate testing is associated with several negative consequences, such as abnormal behavior of an application or its complete failure because of crashed inputs. Active research in this field aims to fill critical gaps in the corresponding knowledge, since there are currently no specific guidelines or parameters that can help identify the most efficient tools. As mentioned earlier, a large number of web testing tools have already been developed, most of which have similar functions. The importance of web application testing explains why it is essential to perform all tests correctly, which in turn makes it necessary to determine which tools are more effective in terms of their functions. Although the reviewed literature attempts to provide such guidelines, it is still challenging to conduct an adequate comparison.

Furthermore, the studies differ in the results of certain calculations, such as average response time, total execution time, or throughput. For instance, Shrivastava and Prapulla (2020) report that Apache JMeter and Locust have almost the same average response time (around 10 units). At the same time, Ibrahim and Fadlallah (2018) state that JMeter’s average response time is significantly higher and can rise to 984 units, while the same indicator for Locust does not exceed 18 units. Such differences indicate that the actual technical parameters of various testing tools remain uncertain and that a deeper study should be conducted on the subject.

The discussed studies apply a comparative methodology to determine which testing tools are more efficient for different parameters. However, the most significant gap in the investigated research is the narrow scope of comparison, since it is primarily conducted between two particular testing tools. Although the corresponding results demonstrate the tools’ efficiency for various parameters, it is still challenging to identify the most effective tool among multiple candidates. Some studies compare many tools at once by dividing them into groups, but this still does not provide sufficient data to make a choice in specific situations. Nonetheless, if the choice is limited to a small number of testing tools, the reviewed studies can be applied. For instance, if it is necessary to decide whether JMeter or Locust is more appropriate for a particular task, one can base the decision on the comparisons in the reviewed studies.

Conclusion

The reviewed literature contains thorough analysis and detailed information on many software testing tools. However, there are so many such tools that it is challenging to compare them all in terms of their performance efficiency. Furthermore, the reviewed studies mostly analyze the functional capabilities of the testing tools, while information on their performance in terms of hardware use is largely absent. That makes it challenging to identify the testing tool with the least memory consumption. Thus, it is advisable to conduct a deeper investigation of the tools’ hardware requirements and the corresponding performance outcomes. A simultaneous comparative study of multiple tools is also necessary to identify the most efficient software testing tool.

References

Abbas, R., Sultan, Z., & Bhatti, S. N. (2017, April). Comparative analysis of automated load testing tools: Apache JMeter, Microsoft Visual Studio (TFS), LoadRunner, Siege. In 2017 International Conference on Communication Technologies (ComTech) (pp. 39–44). IEEE.

Ibrahim, O. I. T. A., & Fadlallah, H. (2018). A comparative study of web service testing tools: Apache JMeter & Locust. Master’s thesis, University of Science & Technology.

Jailia, M., Agarwal, M., & Kumar, A. (2018). Comparative study of N-tier and cloud-based web application using automated load testing tool. Advances in Intelligent Systems and Computing, 625, 239–250.

Kar, A., & Corcoran, P. (2019). GazeVisual: A practical software tool and web application for performance evaluation of eye tracking systems. IEEE Transactions on Consumer Electronics, 65(3), 293–302.

Pradeep, S., & Sharma, Y. K. (2019, February). A pragmatic evaluation of stress and performance testing technologies for web-based applications. In 2019 Amity International Conference on Artificial Intelligence (AICAI) (pp. 399–403). IEEE.

Shrivastava, S., & Prapulla, S. B. (2020). Comprehensive review of load testing tools. International Research Journal of Engineering and Technology, 7(5), 3391–3395.

Table 1: The Results of Tools Testing

Table 2: Comparison of Apache JMeter and Locust in testing on the parameter of execution time in seconds